Real-world data annotation for 3D object detection. (Right) 3D bounding boxes are annotated in the 3D world with detected surfaces and point clouds.

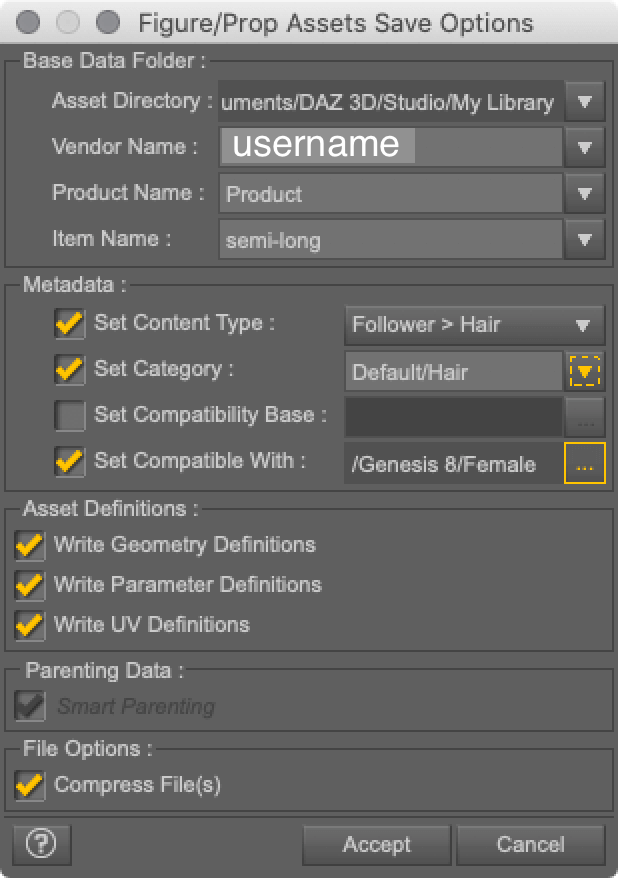

While there are ample amounts of 3D data for street scenes, due to the popularity of research into self-driving cars that rely on 3D capture sensors like LIDAR, datasets with ground truth 3D annotations for more granular everyday objects are extremely limited. To overcome this problem, we developed a novel data pipeline using mobile augmented reality (AR) session data. With the arrival of ARCore and ARKit, hundreds of millions of smartphones now have AR capabilities and the ability to capture additional information during an AR session, including the camera pose, sparse 3D point clouds, estimated lighting, and planar surfaces.

In order to label ground truth data, we built a novel annotation tool for use with AR session data, which allows annotators to quickly label 3D bounding boxes for objects. This tool uses a split-screen view: on the left, 2D video frames overlaid with 3D bounding boxes; on the right, a view showing 3D point clouds, camera positions and detected planes. For static objects, we only need to annotate an object in a single frame and propagate its location to all frames using the ground truth camera pose information from the AR session data, which makes the procedure highly efficient.

Fig 3. Annotators draw 3D bounding boxes in the 3D view, and verify their location by reviewing the projections in 2D video frames.
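Propagating a single annotation of a static object to every frame can be illustrated with a minimal sketch. It assumes a simple pinhole camera looking along +z, per-frame world-to-camera poses given as a 3x3 rotation and a translation, and an axis-aligned box; none of these conventions, function names, or parameters come from the original tool, and AR frameworks may use different axis conventions.

```python
# Hypothetical sketch: reuse one world-space box annotation in every frame
# by projecting it with that frame's camera pose (assumed world-to-camera).

def box_corners(center, size):
    """Return the 8 corners of an axis-aligned box in world coordinates."""
    cx, cy, cz = center
    hx, hy, hz = (s / 2.0 for s in size)
    return [(cx + sx * hx, cy + sy * hy, cz + sz * hz)
            for sx in (-1, 1) for sy in (-1, 1) for sz in (-1, 1)]

def world_to_camera(point, rotation, translation):
    """Apply assumed extrinsics: p_cam = R * p_world + t."""
    return tuple(
        sum(rotation[r][c] * point[c] for c in range(3)) + translation[r]
        for r in range(3)
    )

def project(point_cam, fx, fy, cx, cy):
    """Pinhole projection to pixels; None if the point is behind the camera."""
    x, y, z = point_cam
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)

def propagate_box(corners_world, frame_poses, intrinsics):
    """Project the same world-space corners into each frame."""
    fx, fy, cx, cy = intrinsics
    return [
        [project(world_to_camera(p, R, t), fx, fy, cx, cy) for p in corners_world]
        for R, t in frame_poses
    ]

# Illustrative values only: one box annotated once, two camera poses.
IDENTITY = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
corners = box_corners(center=(0.0, 0.0, 4.0), size=(1.0, 1.0, 1.0))
poses = [(IDENTITY, (0.0, 0.0, 0.0)),    # frame 0
         (IDENTITY, (-0.5, 0.0, 0.0))]   # frame 1: camera shifted 0.5 along +x
pixels = propagate_box(corners, poses, intrinsics=(500.0, 500.0, 320.0, 240.0))
```

Because the object is static, its world-space corners never change; only the per-frame pose does, which is why one annotation is enough for the whole video under these assumptions.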
